Reclaim The Net is funded by the community. If you support free speech and restoring privacy and civil liberties, please become a supporter here. Thank you.

|

Thirty years ago, Congress forced every phone company in America to build a surveillance backdoor into its network.

Last year, a foreign government walked right through it, and what they accessed is worse than anything that's been publicly reported in the headlines.

But the real story isn't the hack. It's what happened next: who lobbied whom, which rules got quietly killed, and how the government's supposed "fix" has nothing to do with security and everything to do with protecting the companies whose negligence made the breach possible.

Today, we follow the money, pull the timelines apart, and find a pattern that keeps repeating, one that's about to repeat again with your private messages...

|

|

Elon Musk's AI company filed a federal lawsuit on Thursday asking a judge to block Colorado from enforcing a law that would let the state dictate what Grok can and cannot say.

The complaint, lodged in the US District Court for the District of Colorado against Attorney General Philip Weiser, calls Senate Bill 24-205 unconstitutional on First Amendment, Dormant Commerce Clause, and Equal Protection grounds. xAI wants the whole thing thrown out before it takes effect on June 30.

We obtained a copy of the lawsuit for you here.

SB 24-205 defines "algorithmic discrimination" as any AI output that results in "unlawful differential treatment or impact that disfavors an individual or group" based on protected characteristics.

But the law then carves out an exemption for discrimination designed to "increase diversity or redress historical discrimination." The state, in other words, built a law that bans one kind of differential treatment while explicitly blessing another. The distinction rests entirely on whether Colorado approves of the reason.

That's a content and viewpoint distinction written into statute. xAI's complaint argues the law "compels Plaintiff xAI to alter Grok, forcing Grok's output on certain State-selected subjects to conform to a controversial, highly politicized viewpoint."

The company calls the measure "an effort to embed the State's preferred views into the very fabric of AI systems" and says it would force developers "to distort their AI models to seek and output progressive ideology instead of the truth."

The constitutional argument about the bill itself has real weight. A law that regulates AI outputs based on whether the resulting discrimination serves the state's preferred goals is the kind of viewpoint-based speech regulation that courts subject to the highest level of scrutiny.

The law's scope is enormous. A "high-risk" AI system is defined as one that "makes, or is a substantial factor in making, a consequential decision" in areas including employment, housing, education, healthcare, and financial services.

The definition of "substantial factor" is breathtakingly broad, covering "any use of an artificial intelligence system to generate any content, decision, prediction, or recommendation concerning a consumer that is used as a basis to make a consequential decision."

If someone uses Grok to draft interview questions or summarize a stack of CVs, that's enough. The AI doesn't have to make the hiring call itself. It just has to touch the process.

And the law applies wherever a single Colorado resident might be affected. xAI is incorporated in Nevada, headquartered in California, and has no offices in Colorado. The complaint argues that SB 24-205 regulates development and deployment activities that happen entirely outside the state, in violation of the Dormant Commerce Clause.

Colorado's own political leadership hasn't been able to settle on whether this law is a good idea. Governor Jared Polis signed it in May 2024 "with reservations," warning that "Government regulation that is applied at the state level in a patchwork across the country can have the effect to hamper innovation and deter competition in an open market."

Polis flagged something specific for the speech analysis. He noted that "[l]aws that seek to prevent discrimination generally focus on prohibiting intentional discriminatory conduct," but SB 24-205 "deviates from that practice by regulating the results of AI system use, regardless of intent."

That's a significant admission from the person who signed the bill into law. The measure creates liability not for intentional bias but for statistical outcomes, and only the outcomes Colorado doesn't like.

Attorney General Weiser himself called the bill "really problematic" in August 2025 and said it "needs to be fixed."

A joint letter in May 2025, signed by Polis, Weiser, two US representatives, a US senator, and Denver's mayor, asked the state legislature to delay the law until January 2027 so that its problems could be fixed.

The legislature instead just pushed the effective date to June 30, 2026, and left everything else untouched.

A March 2026 working group proposal to strip the algorithmic discrimination requirement hasn't been introduced as a bill by any legislator. So the law stands as written.

The First Amendment argument has multiple layers. xAI argues that every choice a developer makes when building an AI model is an expressive activity, from selecting training data to writing system prompts to calibrating guardrails.

The complaint cites the Supreme Court's 2024 decision in Moody v. NetChoice, which held that social media platforms engage in speech when curating content, and quotes the Court's observation that on "the spectrum of dangers to free expression, there are few greater than allowing the government to change the speech of private actors in order to achieve its own conception of speech nirvana."

The complaint also contends SB 24-205 burdens users' right to receive information.

The argument is straightforward enough. If the law forces developers to alter model outputs so they don't produce disfavored statistical patterns, then users get sanitized answers instead of whatever the model would have generated without state interference.

You don't have to agree with everything xAI does to recognize that a state compelling a specific ideological adjustment to AI training data is a genuinely alarming precedent for speech.

There's a vagueness problem, too. SB 24-205 prohibits "algorithmic discrimination" and exempts discrimination that redresses "historical discrimination," but never defines "historical discrimination."

It leaves that to the Attorney General to figure out through rulemaking. The law creates a $20,000-per-violation penalty for noncompliance, gives the AG exclusive enforcement authority, and has no private right of action. That concentration of definitional and enforcement power in one office, combined with terms that nobody can pin down, is the kind of arrangement that chills speech even before a single enforcement action.

|

If this coverage matters to you, please become a paid supporter today. The threats to privacy and free speech are only growing, and so is the work required to oppose them. Your support is what makes that possible.

|

|

Massachusetts just voted to force every social media user in the state to prove their age to a tech company.

The bill passed the House 129-25 on Wednesday, banning children under 14 from social media entirely, requiring parental consent for 14- and 15-year-olds, and mandating that platforms build age verification systems to enforce all of it. If it becomes law, the policy takes effect on October 1.

We obtained a copy of the bill for you here.

House Speaker Ron Mariano and Ways and Means Chair Aaron Michlewitz framed the legislation as protection. "This ban would be among the most restrictive in the entire country, helping to protect young people from harmful content and addictive algorithms that have a proven negative impact on their mental health," they said in a joint statement.

They also described the broader goal: "The simple reality is that Massachusetts must do more to ensure that our laws keep pace with modern challenges – especially when it comes to protecting our children, and to setting students up for success in the classroom and beyond."

The bill doesn't say how companies should verify ages. It leaves that to Attorney General Andrea Campbell, who would have until September 1 to write the implementing regulations.

That vagueness is deliberate, according to Michlewitz, who said it gives the AG flexibility in a changing industry.

But the practical reality of age verification is that someone has to prove who they are.

That means government IDs, facial scans, or behavioral tracking, and those requirements don't just apply to kids. Every user on the platform has to go through the system, because you can't filter minors without checking adults, too.

We already know how this plays out. When Discord rolled out age verification for UK and Australian users, a third-party vendor handling the ID checks was breached within months. Approximately 70,000 users had their government-ID photos exposed, according to Discord's own disclosure. Hackers posted photos of people holding government ID cards to a Telegram channel, alongside names, email addresses, and partial financial data. Discord's response to that breach was to announce global mandatory age verification.

Massachusetts lawmakers seem unbothered. "We know that there could be some potential legal challenges," Michlewitz said. "We think it's the right thing to do, we think we're on solid ground."

Asked directly whether data privacy came up during the drafting process, Mariano gave an answer that says a lot about how seriously the legislature weighed the surveillance costs: "Well, I'm sure we have, but the issue is that we're doing it to protect kids, and a lot of it is aimed at an age group that we think is well worth the investment of time in getting the right ages and making sure that only kids who are maturing are involved in this."

That response sidesteps the central problem. Age verification doesn't only collect data about kids. It collects identity data from everyone, and that data has to go somewhere.

It gets stored by third-party vendors, processed by facial recognition algorithms, and retained for periods that companies define in their own privacy policies.

|

Getting merchandise for yourself or as a gift helps support the mission to defend free speech and digital privacy.

It also helps raise awareness every time you wear or use it.

Your merch purchase goes directly toward sustaining our work and growing our reach.

It's a simple, effective way to support. Get yours now.

|

|

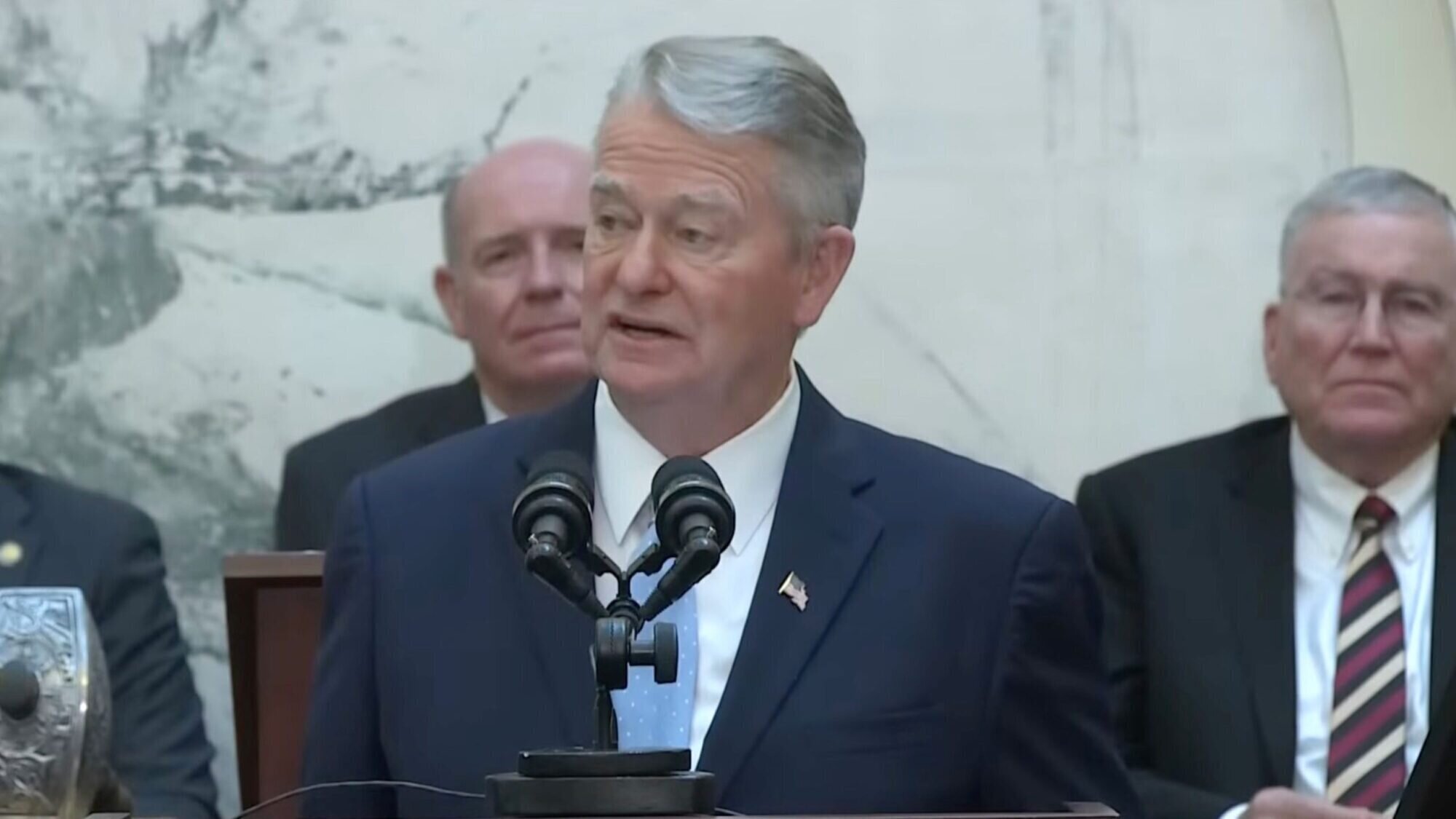

Idaho just became one of the few states to draw a line against mandatory digital identification. Governor Brad Little signed Senate Bill 1299 on April 1, 2026, and the new law does something genuinely unusual in American state politics right now: it pushes back against digital ID rather than pushing it forward.

We obtained a copy of the bill for you here.

The bill creates Section 67-2364 of the Idaho Code, prohibiting government entities from requiring "any person to obtain, maintain, present, or use digital identification."

Approximately three-quarters of US states are currently offering or developing electronic driver's licenses. The national momentum is clearly toward digital ID systems, with states like Arkansas, Texas, Georgia, and Utah all advancing their own versions in 2025 alone. Idaho is swimming against that current.

The bill, introduced by Senator Tammy Nichols, goes further than a simple opt-out. It prohibits public entities from denying, delaying, conditioning, or reducing "any service, benefit, license, employment, education, or access based on a person's refusal or inability to use digital identification."

That second clause, "or inability," protects people who can't use digital ID, not just those who won't. Anyone without a smartphone, without reliable internet, without the technical literacy to navigate a digital wallet, keeps full access to government services. Physical, non-digital identification remains "valid for all governmental purposes" under the law.

The bill also addresses what happens when someone voluntarily shows a digital ID during a government interaction. A government entity cannot "require a person to surrender, unlock, or relinquish control of a personal electronic device for identity verification." Handing your phone to a police officer or a clerk at the DMV is not the same as handing them a laminated card.

A phone contains your messages, your photos, your browsing history, and your location data. Presenting a digital ID "shall not constitute consent to search or access any other contents of a device."

That's a Fourth Amendment protection written directly into a state statute.

Government agencies are also barred from using digital ID as a surveillance tool. The law prohibits agencies from tracking individuals, retaining identity data beyond a single transaction, or using digital identification "as a universal or shared credential across agencies."

That last restriction is particularly significant. It blocks the creation of a de facto digital identity system where a single credential follows you from the tax office to the library to the health department, linking every interaction into a unified government profile.

The bill didn't survive the legislative process unscathed. A Senate amendment removed the original provision stating that "information incidentally observed on a device shall not be used to establish probable cause or justification for further search or seizure." That was a strong protection against the kind of casual surveillance that happens when a government employee glances at your phone screen while checking your ID. Its removal is a real loss.

The amendment also weakened enforcement. The original bill provided statutory damages of $500 to $2,500 and civil penalties up to $5,000 against government entities that violated the law. The amended version strips those out.

Enforcement now rests with the attorney general, who must give a public entity 15 days' written notice to fix a violation before taking any action.

Citizens can still seek declaratory or injunctive relief, and prevailing plaintiffs get attorney's fees, but the direct financial penalties that would have made agencies think twice are gone.

The amendment also added a provision stating that "no public employee shall be personally liable for actions taken within the employee's scope of employment," which removes individual accountability almost entirely.

Idaho's own legislature considered HB 542 this same session, a bill that would have compelled platforms to continuously track, estimate, and verify the identities of all users, including minors. The fact that both bills moved through the same legislature in the same session tells you something about the competing pressures these lawmakers face.

The broader context is hard to ignore. Digital ID systems are convenient, and convenience is how surveillance expands.

You start with a voluntary app, then agencies quietly stop supporting the physical alternative, then you can't renew your vehicle registration or pick up a prescription without pulling out your phone and authenticating through a system that logs when you were there, what you needed, and which device you used. Idaho's law is designed to prevent exactly that slow erosion.

Whether the weakened enforcement provisions give it enough teeth to actually do so is another question.

|

|

Federal prosecutors have ordered Reddit to appear before a grand jury in Washington, D.C., and hand over the personal data of an anonymous user who posted criticism of Immigration and Customs Enforcement. The company has until April 14 to comply. Reddit has declined to say whether it plans to fight the order.

The user, identified in court filings as John Doe, is a US citizen in the Pacific Northwest. Doe's attorneys reviewed the account's post history and found nothing resembling criminal activity.

The most aggressive posts they could locate: sharing already-public biographical details about Jonathan Ross, the ICE agent who killed Renee Good in Minneapolis in January; suggesting "Urine speaks louder than words" as an anti-ICE protest sign (a reference to a song); and writing "TSA sucks and we all know it."

The First Attempt

It started on March 4, when an ICE agent in Fairfax, Virginia, sent Reddit an administrative summons demanding the user's name, address, phone number, banking and credit card information, IP addresses, phone model numbers, and the names of any other accounts tied to their Reddit profile.

The legal basis cited for this demand was a provision of the Smoot-Hawley Tariff Act of 1930, a statute that governs customs duties, boat show sales, wild animal imports, and forfeited wines and spirits.

The summons included a threat and a gag order. "Failure to comply with this summons will render you liable to proceedings in a U.S. District Court to enforce compliance with this summons as well as other sanctions," it read.

"You are requested not to disclose the existence of this summons for an indefinite period of time. Any such disclosure will impede the investigation and thereby interfere with the enforcement of federal law."

Reddit notified John Doe two days later. Doe retained lawyers, and on March 12 they filed a motion to quash the summons in federal court in the Northern District of California.

We obtained a copy of the motion for you here.

The motion pointed out the obvious. John Doe is a US citizen who has never traveled outside the country, has no business dealings overseas, has not imported or exported anything, and primarily uses their Reddit account to talk about local politics in Oregon. Nothing about the account, the user, or any of their posts has the faintest connection to customs duties or international trade.

Faced with a legal challenge, the government withdrew its request.

Four Days Later

The withdrawal came on March 27. By March 31, Reddit had received a new order. This time the demand came not from a field agent in Virginia but from a Special Assistant US Attorney in Washington, D.C., where the US Attorney's office is led by Jeanine Pirro, the former judge and Fox News host, confirmed to the role in August 2025. The new subpoena ordered Reddit itself to appear before a grand jury, and it sought roughly three times more data than the original request.

The change from an administrative summons to a grand jury subpoena is significant. An administrative summons can be challenged in open court, as John Doe's lawyers demonstrated. A grand jury operates in secret. The proceedings are not adversarial. There is no lawyer for the other side. There is no public record. The purpose of the proceeding is to let a prosecutor build a case toward criminal charges, and the person being investigated has almost no ability to contest what happens behind closed doors.

There is no known precedent in the current wave of immigration-related social media investigations for summoning a major tech company before a grand jury. The move represents a significant escalation, and it is worth understanding why. In a grand jury proceeding, First Amendment protections are at their weakest. The target of the investigation has almost no ability to assert their rights before the damage is done. By the time the secrecy lifts, if it ever does, the government already has what it wants.

Why Washington

The reason the government moved the case to DC after losing in California is not hard to figure out. Courts in the Northern District of California had repeatedly blocked ICE's attempts to unmask anonymous social media users.

Last fall, a federal magistrate judge ordered Meta not to hand over the information ICE sought about an anonymous Instagram user. The same legal team representing John Doe had intervened on the user's behalf and won.

The pattern held across multiple cases. The government would issue a subpoena, a challenge would be filed, and the government would fold. The grand jury route sidesteps that pattern entirely. It takes the question out of an open courtroom and puts it behind closed doors, in a jurisdiction of the government's choosing, under rules that overwhelmingly favor the prosecution.

None of the records associated with this grand jury will be accessible to the public. The government lost when it had to make its case in the open. So it stopped making its case in the open.

The Broader Campaign

Reddit's own transparency data reflects the pressure. The first half of 2025 marked the highest volume of law enforcement data requests the company has ever received in a single reporting period: 1,179 requests, including 423 subpoenas and 27 court orders. Sixty-six percent came from US agencies. Reddit disclosed user data in 82 percent of those cases.

Washington, D.C., is the district from which Reddit receives the most federal law enforcement requests.

Reddit's public statement on the John Doe case says the right things.

"Privacy is central to how Reddit operates, and we take our commitment to protecting that seriously," the company said. "We do not voluntarily share information with any government, especially not on users exercising their rights to criticize the government or plan a protest."

The company says it reviews requests for "legal sufficiency," objects to overbroad demands, notifies users "whenever possible," and provides only the "minimum" data required.

An 82 percent compliance rate with law enforcement requests is worth keeping in mind while reading those assurances.

What This Means

The legal question here is narrow. The practical question is not.

A US citizen posted criticism of a federal agency on the internet using a pseudonymous account. They shared biographical details about an ICE agent that were already public. They suggested a crude joke for a protest sign. They said TSA is bad.

For this, the federal government issued a summons backed by a 1930 tariff law that has nothing to do with Reddit posts.

When that was challenged in court, the government withdrew. Four days later, it came back with a grand jury subpoena, moved the proceedings to a different jurisdiction, expanded the scope of the data request, and wrapped the entire thing in secrecy.

The point is not just to identify one Reddit user. Every time a person reads about this case and decides not to post something critical, not to share information about federal enforcement, or not to make a joke at the government's expense, that decision is the policy working as designed.

The Digital ID Agenda

The push to mandate online age verification is building the infrastructure that will make cases like John Doe's unnecessary. A dozen "child online safety" bills are advancing through Congress with bipartisan support, and half of US states have already enacted laws requiring government ID submission, biometric facial scans, or third-party verification before users can access certain websites.

The justification is protecting children. The consequence is eliminating anonymity for everyone. There is no way to reliably verify that a user is 16 without verifying who they are. Every age-check system that actually works requires collecting identifying information, whether that means scanning a passport, submitting a credit card, or handing biometric data to a third-party vendor. Once a platform has linked a user's legal identity to their account, that identity can be subpoenaed, hacked, or handed over to law enforcement.

The anonymous Reddit user who posts about local politics in Oregon ceases to exist. Meta's Mark Zuckerberg has told a court that Apple and Google should verify the identity of every smartphone user at the operating system level, a proposal that would end anonymous internet access at the root.

Consider what that world would mean in the context of the John Doe case. Right now, the government has to convene a secret grand jury and drag a tech company to Washington just to find out who posted "TSA sucks and we all know it." That process is slow, legally fraught, and publicly embarrassing when it leaks. Digital ID systems would eliminate the need for any of it. The identity would already be on file, pre-collected, waiting for the next subpoena. The surveillance would be baked into the platform before the user ever typed a word.


Thanks for reading,

Reclaim The Net
